Did you know?

- 92% of security professionals are concerned about the security impact of AI agents across the workforce.

- 87% say AI is increasing the sophistication and success rate of malware, while 77% say generative AI is already part of their security stack.

- 74% are still limiting autonomous AI action in the SOC until explainability improves, even though 96% say AI improves speed and efficiency.

From Darktrace’s The State of AI Cybersecurity 2026 report, one thing is clear:

The conversation is no longer, “Should we use AI in security?”

That decision has already been made.

The real question is whether organizations are deploying AI faster than they are building the controls, visibility, and decision-making discipline needed to manage it.

That gap matters.

If 92% are worried about AI agents, and teams still need to understand how defensive AI makes decisions before they can trust it, then the issue is no longer adoption. It is governance, explainability, and operational readiness.

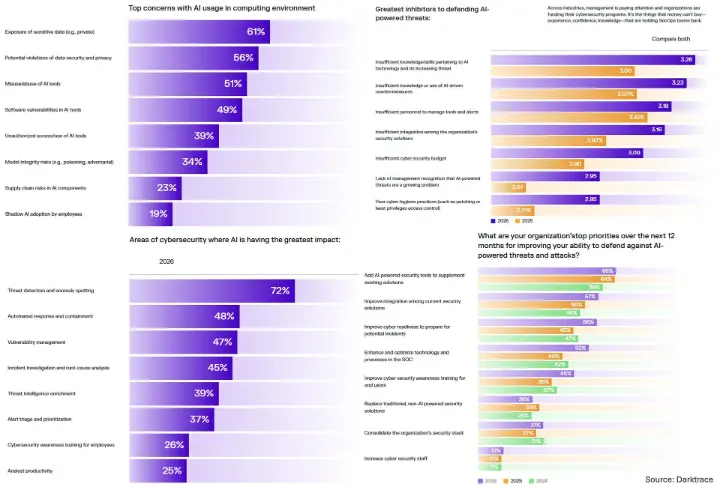

The report also shows where AI is already having the greatest impact in cybersecurity:

• Threat detection and anomaly spotting: 72%

• Automated response and containment: 48%

• Vulnerability management: 47%

• Incident investigation and root-cause analysis: 45%

• Threat intelligence enrichment: 39%

And over the next 12 months, organizations say their top priorities are:

• Adding AI-powered security tools to supplement existing solutions: 65%

• Improving integration among current security solutions: 57%

• Improving cyber readiness to prepare for potential incidents: 56%

• Enhancing and optimizing technology and processes in the SOC: 52%

• Improving cybersecurity awareness training for end users: 45%

Our takeaway for AI and automation leaders: the next competitive edge is not just adding more AI. It is designing systems where humans can trust, supervise, and intervene when AI acts at scale.

AI without guardrails creates speed.

AI with trust creates resilience.

Curious how others see this: are most companies underinvesting in AI security governance while racing ahead on deployment?

#AICybersecurity #AIAgents #Cybersecurity #Automation #AIStrategy #EnterpriseAI
