Key Takeaways

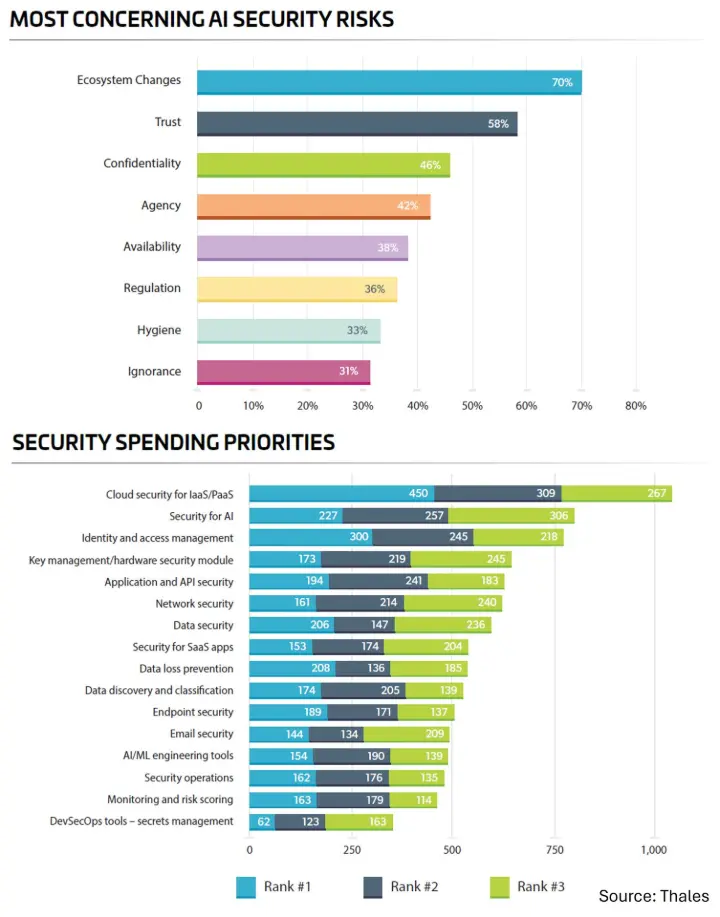

- 70% of respondents say the pace of change in the AI ecosystem is now the top AI security risk.

- 61% report AI applications are already being targeted, and 59% have seen deepfake attacks.

- Only 34% have complete knowledge of where their data is stored, and only 47% of sensitive cloud data is encrypted.

We often talk about AI risk as if it is mainly a model problem. It is not. It is increasingly a data access, identity, and control problem.

The Thales 2026 Data Threat Report makes that uncomfortably clear: cloud storage, cloud apps, and cloud management infrastructure are now the top attack targets, while credential theft is rising fast.

And despite all the worry about autonomous AI agents (see OpenClaw), human error is still the leading cause of breaches, at 28%.

The most important takeaway is this:

In the agentic era, AI behaves less like a tool and more like a highly capable insider with speed, reach, and permissions. If your organization cannot clearly see where data lives, who can access it, and how it is protected, adding more AI may simply increase your exposure.

The next phase of AI transformation will not be won by the companies with the most agents.

It will be won by the ones with the best data discipline and security posture.

Are most organizations moving fast on agentic AI while underinvesting in the basics of data security?

#AI #Cybersecurity #DataSecurity #AgenticAI #Automation #EnterpriseAI

What are the biggest AI data security risks enterprises should worry about in 2026?